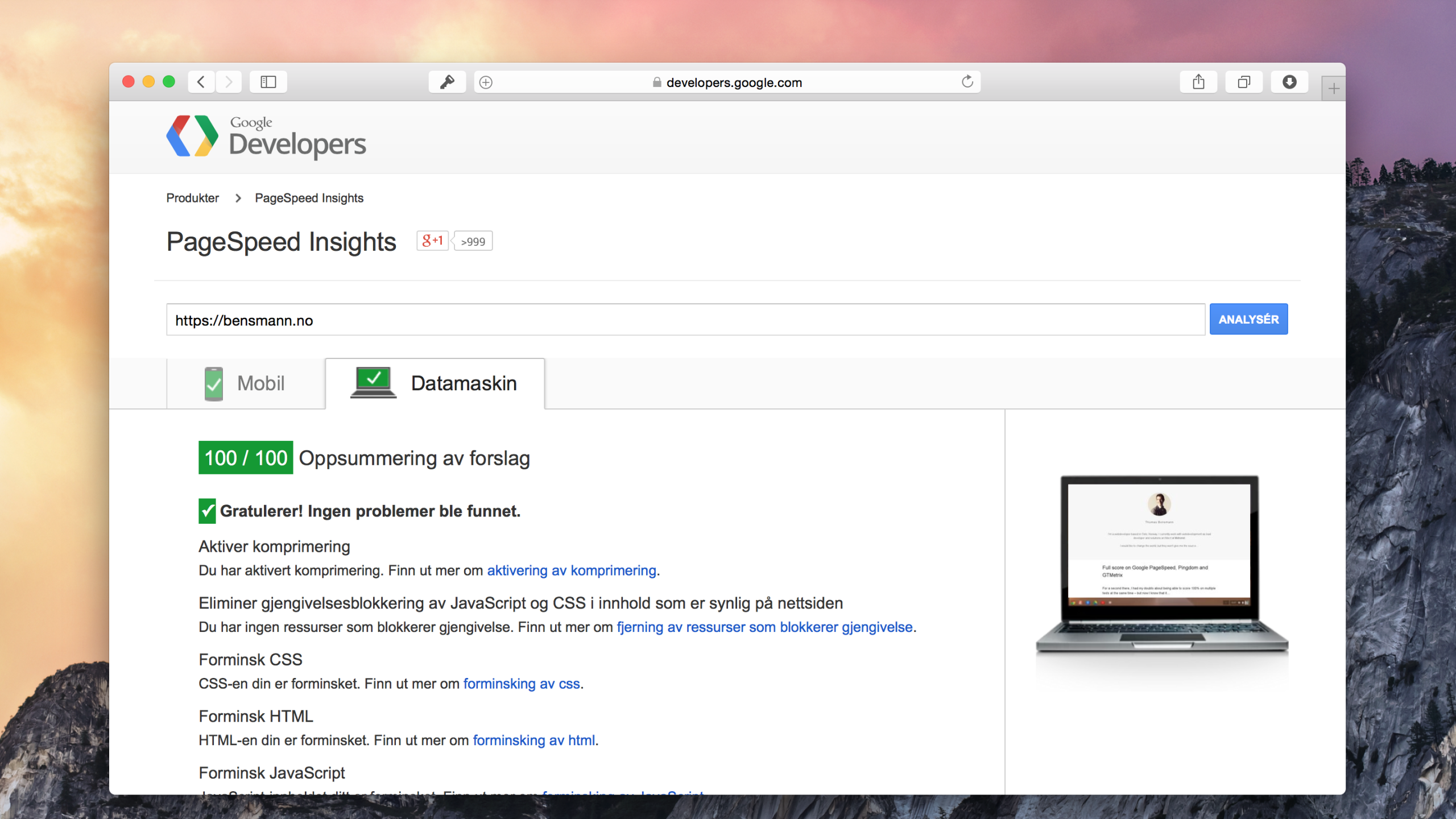

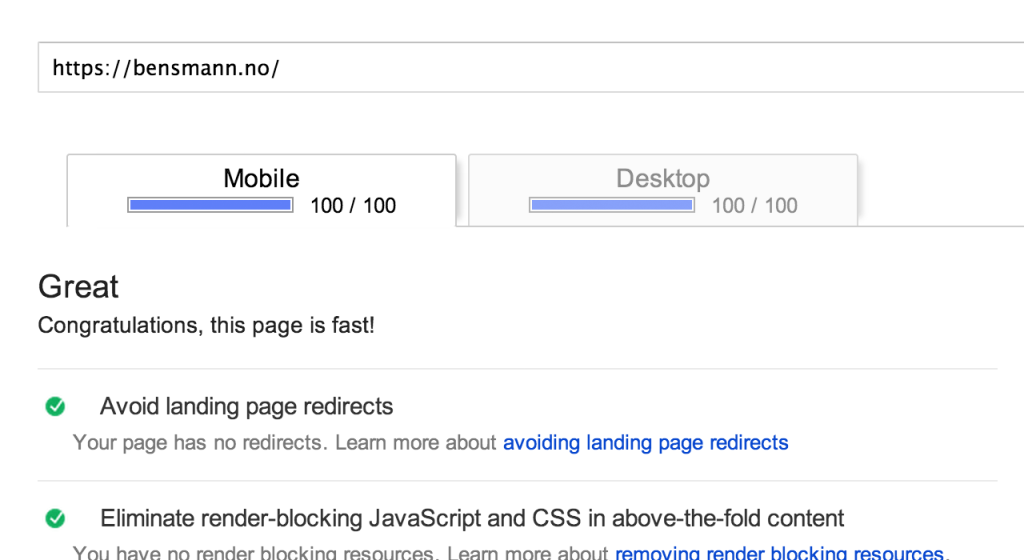

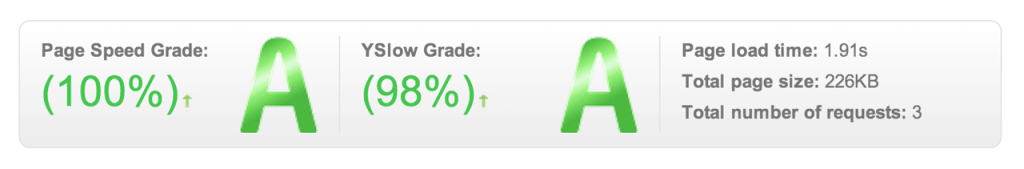

For a second there, I had my doubts about being able to score 100% on multiple tests at the same time, but now I know it can be done. The first 100% came from Pingdom, after which I started on the GTMetrix test. The problem was that by the time I had 99% on GTMetrix, my Google PageSpeed score had dropped significantly. Now, though, after more optimizing, all three are giving me the thumbs up on my new server setup.

The result

Let's start by looking at the results…

Google PageSpeed – link

Pingdom speedtest – link

GTMetrix – link

Note that the YSlow score is only 98% because I don't use a CDN. All other criteria have been met.

What needed to be done

So, what did it take to get here? My stack, which I only set up recently, runs a combination of nginx, Varnish, Apache and PHP 5.5 (FPM): nginx serves static files and handles SSL with SPDY, Varnish handles caching, and Apache generates the pages. Here are some of the things that needed tweaking…

(If you're interested in building a setup like this, I recommend the posts on bjornjohansen.no by @bjornjohansen.)

Minify and optimize

This one we all know. Everything needs to be minified: HTML, CSS, JS, everything. One thing to note here is that to get 100%, you have to squeeze out every byte, and that includes unnecessary tags in the HTML. At one point, the only thing standing between me and a 100% score on GTMetrix was 7 bytes!

I use and recommend YUI Compressor for CSS and JS, and Google's PageSpeed module (mod_pagespeed) for HTML minification.
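To make the "every byte counts" point concrete, here is a deliberately naive HTML minifier in Python. It is only a toy illustration and is not how mod_pagespeed or YUI Compressor work internally: it strips comments and the whitespace between tags, and it would break content inside `<pre>`, `<textarea>` or inline `<script>`/`<style>` blocks, which real tools handle properly.

```python
import re

def naive_minify_html(html: str) -> str:
    """Toy HTML minifier: removes comments and whitespace between tags.

    Not safe for <pre>, <textarea> or inline <script>/<style> content.
    """
    # Remove HTML comments (this would also remove IE conditional comments).
    html = re.sub(r"<!--.*?-->", "", html, flags=re.DOTALL)
    # Remove whitespace that sits entirely between two tags.
    html = re.sub(r">\s+<", "><", html)
    # Collapse remaining runs of two or more whitespace characters to one space.
    html = re.sub(r"\s{2,}", " ", html)
    return html.strip()

page = """
<html>
  <!-- main layout -->
  <body>
    <p>Hello,   world</p>
  </body>
</html>
"""
small = naive_minify_html(page)
print(small)
print(f"saved {len(page) - len(small)} bytes")
```

Even on this tiny page, the indentation and the comment account for a noticeable share of the bytes, which is why the scores keep nagging until they are gone.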

It doesn't stop there, either: image files can also stand to have a few bytes squeezed out. Images are easy to optimize manually with a variety of applications, or you can install libraries on your server to handle it. In my case, I have a folder on my Mac in which all files are automatically optimized with ImageOptim.

Combine

It's all about reducing the number of requests. Combine all your CSS into one file and all your JS into another. If you need some JS early on and the files are small… inline it! The rest should go into the footer. For this site I used Minit by @konstruktors to combine CSS and JS.
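The idea behind combining is simple concatenation in a fixed order, so the browser makes one request per asset type instead of one per file. The Python sketch below is not how Minit works; the file names are hypothetical, and it writes them to a temporary folder so the example runs on its own.

```python
import tempfile
from pathlib import Path

def combine(paths, out_path, separator="\n"):
    """Concatenate asset files, in the order given, into one bundle file."""
    parts = [Path(p).read_text(encoding="utf-8") for p in paths]
    bundle = separator.join(parts)
    Path(out_path).write_text(bundle, encoding="utf-8")
    return bundle

with tempfile.TemporaryDirectory() as tmp:
    d = Path(tmp)
    # Hypothetical source files. Order matters: later CSS rules override
    # earlier ones of equal specificity, and scripts may depend on each other.
    (d / "reset.css").write_text("body{margin:0}", encoding="utf-8")
    (d / "theme.css").write_text("a{color:#c00}", encoding="utf-8")
    (d / "a.js").write_text("var x = 1", encoding="utf-8")
    (d / "b.js").write_text("console.log(x)", encoding="utf-8")

    css = combine([d / "reset.css", d / "theme.css"], d / "all.css")
    # A ';' between scripts guards against a file that lacks a trailing semicolon.
    js = combine([d / "a.js", d / "b.js"], d / "all.js", separator=";\n")

print(css)
print(js)
```

Four requests become two here; on a typical WordPress theme with a handful of plugins, the reduction is usually much larger.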

While we're on the subject of reducing requests, let's not forget that you can save quite a lot of traffic by lazy loading images! For this I recommend trying the awesome BJ Lazy Load plugin for WordPress by @bjornjohansen.
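Lazy loading generally works by rewriting image tags so the browser does not fetch the real file up front. The Python sketch below shows only the server-side half of one common approach, and it is not BJ Lazy Load's actual markup: the `data-src` attribute name is an assumption, and a small client-side script (not shown) is assumed to copy `data-src` back into `src` once the image scrolls near the viewport. The original tag is kept inside `<noscript>` so images still appear without JavaScript.

```python
import re

# 1x1 transparent GIF used as a stand-in until the real image is loaded.
PLACEHOLDER = "data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7"

# Matches <img ... src="..." ...> and captures the attributes around src.
IMG_SRC = re.compile(r'<img\b([^>]*?)\ssrc="([^"]+)"([^>]*)>', re.IGNORECASE)

def defer_images(html: str) -> str:
    """Move each <img> src into a hypothetical data-src attribute."""
    def rewrite(m: re.Match) -> str:
        before, src, after = m.group(1), m.group(2), m.group(3)
        lazy = f'<img{before} src="{PLACEHOLDER}" data-src="{src}"{after}>'
        # Keep the untouched original tag as a no-JavaScript fallback.
        return f"{lazy}<noscript>{m.group(0)}</noscript>"
    return IMG_SRC.sub(rewrite, html)

out = defer_images('<p><img class="photo" src="cat.jpg" alt="Cat"></p>')
print(out)
```

The page now only downloads the tiny placeholder for each image until the script decides the real file is needed, so images far below the fold cost nothing on first load.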

Compress

We can save bandwidth without making any real changes, just by gzipping files before they are served. It's simple, effective and takes almost no time to implement.
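On the server this is mostly a configuration switch (in nginx, the `gzip` directives). To show why it pays off, the Python sketch below compresses a repetitive, made-up stylesheet with the standard `gzip` module and compares sizes. Text assets such as HTML, CSS and JS compress well; already-compressed formats such as JPEG or PNG gain little.

```python
import gzip

def gzip_savings(data: bytes, level: int = 6):
    """Return (original size, gzipped size) in bytes for a payload."""
    compressed = gzip.compress(data, compresslevel=level)
    return len(data), len(compressed)

# A made-up, highly repetitive stylesheet standing in for a real one.
css = b".button{color:#fff;background:#c00;padding:4px 8px}\n" * 200
original, compressed = gzip_savings(css)
print(f"{original} bytes -> {compressed} bytes ({compressed / original:.1%})")
```

Real stylesheets are less repetitive than this one, so expect a smaller, though still substantial, reduction.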

Cache

First of all, Google wants your pages to be snappy: if the server takes more than 250ms to respond, PageSpeed will start complaining. In my case, Varnish mostly takes care of this. Second, browser caching (caching on the client side) is just as important. Setting files to never expire (or as close to never as possible) and sending proper headers will save even more bandwidth.
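"As close to never as possible" usually means about a year. In practice nginx would set these headers (for example with its `expires` directive); the Python sketch below just builds the two relevant headers so you can see what a browser receives. The function name is hypothetical, and a fixed clock is passed in so the output is reproducible.

```python
import time
from email.utils import formatdate
from typing import Optional

ONE_YEAR = 365 * 24 * 60 * 60  # seconds

def far_future_headers(max_age: int = ONE_YEAR, now: Optional[float] = None) -> dict:
    """Build response headers that let browsers cache a file for max_age seconds."""
    if now is None:
        now = time.time()
    return {
        # Modern browsers use Cache-Control; max-age is relative to the response.
        "Cache-Control": f"public, max-age={max_age}",
        # Expires is the older HTTP/1.0 header and takes an absolute HTTP-date in GMT.
        "Expires": formatdate(now + max_age, usegmt=True),
    }

headers = far_future_headers(now=0)  # fixed clock: the Unix epoch
print(headers)
```

The catch with far-future expiry is that browsers will not re-fetch a changed file on their own, so updates need a new URL, for instance a version number in the file name or query string.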

Inline(?!)

This one is tough for me to swallow. Google PageSpeed makes a big deal about render-blocking CSS and JS. With JS this is fair enough: move it into the footer of your page and the problem is often solved. CSS, however, is supposed to be declared in the HEAD. Google's solution is to inline the critical CSS and load the rest after the page has rendered. Crazy, I know… which is why I currently only do this on my front page. It makes the page render faster, but it causes a flash of semi-styled content on every page load. Without it, though, there's no way to get 100% on Google PageSpeed for mobile.
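Here is a rough Python sketch of what the generated HEAD might look like. It uses one common non-blocking pattern, a `rel="preload"` link that switches itself to a stylesheet once loaded, with a `<noscript>` fallback; this is an assumption, not necessarily the technique Google's tooling or this site uses, and the stylesheet URL is hypothetical. Extracting the critical CSS itself is a separate step that is not shown.

```python
import html

def head_with_critical_css(critical_css: str, full_css_url: str) -> str:
    """Inline above-the-fold CSS and load the full stylesheet without blocking render.

    critical_css is assumed to be trusted and must not contain "</style>".
    """
    href = html.escape(full_css_url, quote=True)
    return (
        "<head>\n"
        f"<style>{critical_css}</style>\n"
        # preload fetches the file without blocking rendering; the onload
        # handler then turns it into a real stylesheet so its rules apply.
        f'<link rel="preload" href="{href}" as="style" onload="this.onload=null;this.rel=\'stylesheet\'">\n'
        # Fallback for visitors with JavaScript disabled.
        f'<noscript><link rel="stylesheet" href="{href}"></noscript>\n'
        "</head>"
    )

print(head_with_critical_css("body{margin:0;font-family:sans-serif}", "/css/all.css"))
```

The flash of semi-styled content described above comes from the gap between the inlined rules being applied and the full stylesheet arriving; the smaller that gap, the less noticeable it is.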

Conclusion

All in all, I would say that these speed tests are a good indicator, and one should strive for good results. The Pingdom and GTMetrix tests in particular are very valuable, in my opinion. Google, though, seems to be taking it a little far. Either way, there is a lot to learn here…